


AI agents with RAG: a pragmatic reference architecture

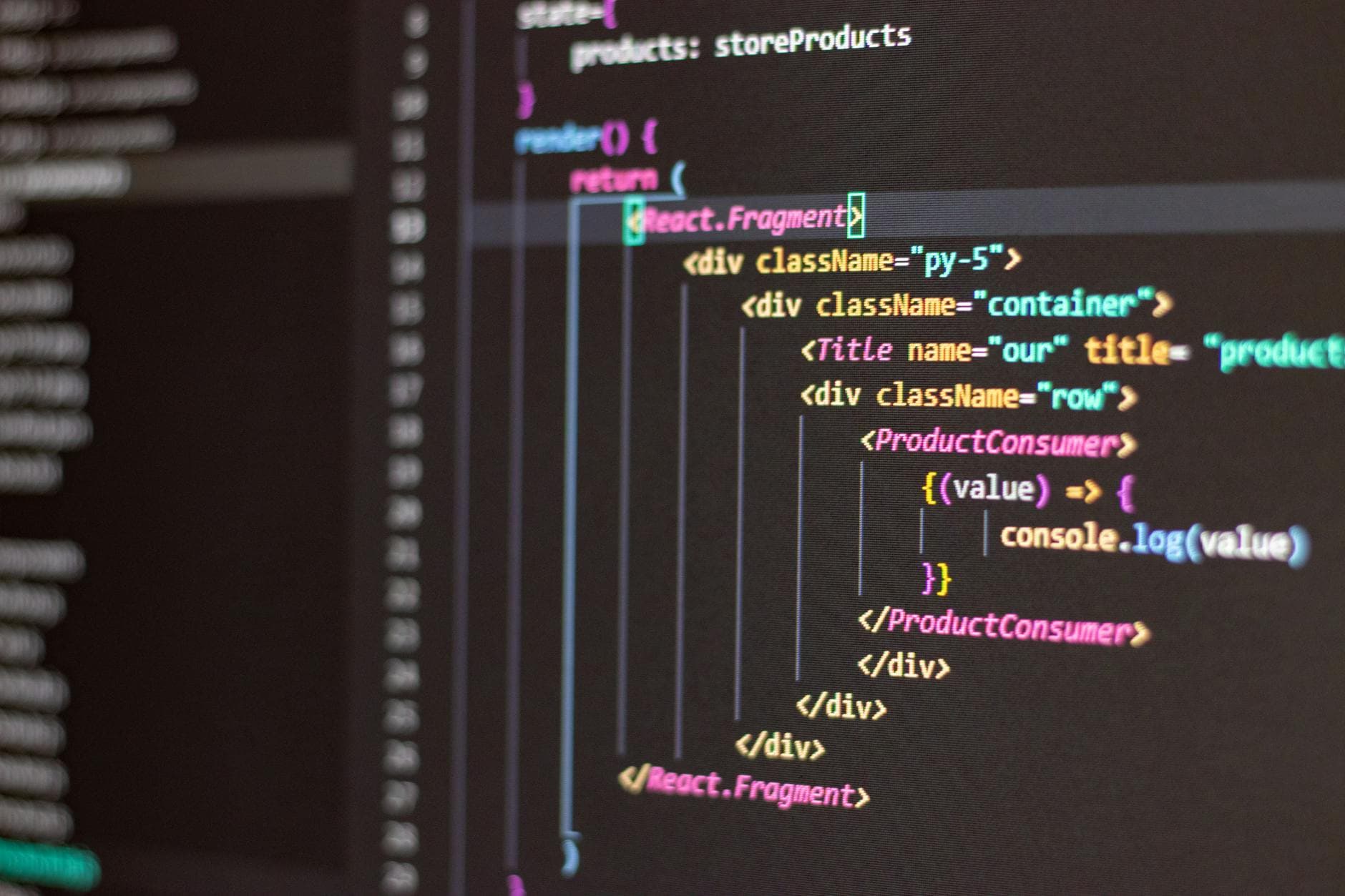

If you’re building enterprise-grade AI agents, Retrieval-Augmented Generation (RAG) is the safety rail that turns clever demos into dependable products. The most reliable systems pair a disciplined retrieval layer with tool-using agents, strict observability, and a hardened data plane. Below is a field-tested reference architecture, the tooling that consistently works in production, and the costly pitfalls to avoid when you scale from prototype to portfolio.

Retrieval layer: design for precision, recency, and control

- Hybrid search by default: combine sparse retrieval (BM25) with dense embeddings, then re-rank the merged candidates with a cross-encoder. Expect a 10-25% lift in answer accuracy over dense-only retrieval.

- Chunking tuned to questions: use structure-aware splits (headings, sentences), 200-600 token windows, and 10-20% overlap. Add title, section, and timestamp metadata.

- Freshness as a first-class signal: index change events, not cron dumps. Maintain recency fields and decay scoring so new policies or prices outrank stale PDFs.

- Context budget discipline: pass 3-7 top passages, trimmed to fit model limits. Summarize repetitive sections server-side to control token burn.

- Guardrails in retrieval: PII scrubbing, entitlement filtering, and tenant isolation applied before embedding and again at query time.
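Here is a minimal sketch of the hybrid pattern in standard-library Python. It makes three assumptions: tokens are lowercase whitespace splits, embedding vectors are precomputed by some embedding model, and a hypothetical `rerank(query, doc)` callable stands in for the cross-encoder. The sparse and dense rankings are merged with Reciprocal Rank Fusion (RRF). RRF is one common fusion choice, not something the text above prescribes; weighted score blending is an alternative.

```python
import math
from collections import Counter
from typing import Callable, Sequence


def bm25_scores(query: str, docs: Sequence[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 over lowercase whitespace tokens (a stand-in for a real sparse index)."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n
    df = Counter(term for toks in tokenized for term in set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if tf[term] == 0:
                continue  # term absent from this document
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores.append(score)
    return scores


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    norms = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norms if norms else 0.0


def rrf(rankings: Sequence[Sequence[int]], k: int = 60) -> dict[int, float]:
    """Reciprocal Rank Fusion: each ranking adds 1 / (k + rank) to a document's score."""
    fused: dict[int, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return fused


def hybrid_search(query: str, query_vec: Sequence[float], docs: Sequence[str],
                  doc_vecs: Sequence[Sequence[float]], rerank: Callable[[str, str], float],
                  candidates: int = 50, top_k: int = 5) -> list[int]:
    """Return document indices: BM25 + dense candidates, fused with RRF, then re-ranked."""
    sparse = bm25_scores(query, docs)
    dense = [cosine(query_vec, vec) for vec in doc_vecs]
    ids = range(len(docs))
    sparse_rank = sorted(ids, key=lambda i: sparse[i], reverse=True)[:candidates]
    dense_rank = sorted(ids, key=lambda i: dense[i], reverse=True)[:candidates]
    fused = rrf([sparse_rank, dense_rank])
    pool = sorted(fused, key=fused.get, reverse=True)[:candidates]
    # Final order comes from the cross-encoder stand-in, not from the fused score.
    return sorted(pool, key=lambda i: rerank(query, docs[i]), reverse=True)[:top_k]
```

RRF combines ranks rather than raw scores. That means BM25 values and cosine similarities never need to share a scale. The value `k=60` is a commonly used default, not a tuned one.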
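Below is a rough sketch of structure-aware chunking with overlap. It assumes markdown-style `#` headings mark section boundaries. It also counts whitespace-separated words as a stand-in for model tokens; a real pipeline would use the embedding model's tokenizer. The `Chunk` fields and the default parameters are illustrative, chosen inside the 200-600 token and 10-20% overlap ranges above.

```python
import re
from dataclasses import dataclass
from typing import Iterator


@dataclass
class Chunk:
    text: str
    title: str
    section: str
    updated_at: str  # ISO-8601 timestamp copied from the source document


def split_sections(markdown: str) -> Iterator[tuple[str, str]]:
    """Yield (heading, body) pairs, treating lines that start with '#' as section breaks."""
    section, lines = "", []
    for line in markdown.splitlines():
        if line.startswith("#"):
            if lines:
                yield section, "\n".join(lines)
            section, lines = line.lstrip("#").strip(), []
        else:
            lines.append(line)
    if lines:
        yield section, "\n".join(lines)


def chunk_document(markdown: str, title: str, updated_at: str,
                   max_tokens: int = 400, overlap: float = 0.15) -> list[Chunk]:
    chunks = []
    for section, body in split_sections(markdown):
        # Sentence split on terminal punctuation followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", body.strip()) if s]
        window, size = [], 0
        for sent in sentences:
            n = len(sent.split())  # whitespace words as a rough token proxy
            if window and size + n > max_tokens:
                chunks.append(Chunk(" ".join(window), title, section, updated_at))
                # Carry trailing sentences forward, up to overlap * max_tokens words.
                carry, carried = [], 0
                for prev in reversed(window):
                    if carried + len(prev.split()) > overlap * max_tokens:
                        break
                    carry.insert(0, prev)
                    carried += len(prev.split())
                window, size = carry, carried
            window.append(sent)
            size += n
        if window:
            chunks.append(Chunk(" ".join(window), title, section, updated_at))
    return chunks
```

Windows never cross section boundaries, so every chunk carries exactly one section label. The title and timestamp are also available for recency scoring at query time.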

Agent orchestration and tooling

- Plan-execute loop: use a light planner that decides when to retrieve, call tools, or ask clarifying questions. Keep the loop count bounded to contain latency.

- Tooling essentials: a retriever, web search for out-of-corpus facts, calculators, calendar/CRM connectors, and a deterministic policy engine for approvals.

- Function calling > prompt hacks: define JSON schemas for tools, enforce strict validation, and retry with constrained candidates on schema mismatch.

- Memory model: separate short-term conversation state, long-term vector memory, and authoritative system-of-record lookups. Never conflate memory with truth.

- Latency strategy: parallelize non-dependent tool calls, prefetch likely docs, and cache embeddings plus re-ranker outputs.
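The following sketches a bounded plan-execute loop with strict argument checks. It assumes a hypothetical `planner(question, history)` callable (in practice an LLM call using function calling). The planner returns either `{"action": "tool", "tool": ..., "args": ...}` or `{"action": "answer", "text": ...}`. A tiny type-only schema stands in for a full JSON Schema validator.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Tool:
    fn: Callable[..., Any]
    schema: dict[str, type]  # required argument name -> expected Python type


def validate(args: dict, schema: dict[str, type]) -> list[str]:
    """Return human-readable schema violations; an empty list means the call is valid."""
    errors = [f"missing argument {k!r}" for k in schema if k not in args]
    errors += [f"unexpected argument {k!r}" for k in args if k not in schema]
    errors += [
        f"{k!r} must be {t.__name__}"
        for k, t in schema.items()
        if k in args and not isinstance(args[k], t)
    ]
    return errors


def run_agent(question: str, planner: Callable[[str, list], dict],
              tools: dict[str, Tool], max_steps: int = 5) -> Optional[str]:
    history: list = []
    for _ in range(max_steps):  # hard bound on loop iterations
        step = planner(question, history)
        if step["action"] == "answer":
            return step["text"]
        name, args = step.get("tool"), step.get("args", {})
        tool = tools.get(name)
        if tool is None:
            history.append({"tool": name, "error": [f"unknown tool {name!r}"]})
            continue
        errors = validate(args, tool.schema)
        if errors:
            # Feed violations back so the planner can retry with corrected arguments.
            history.append({"tool": name, "error": errors})
            continue
        history.append({"tool": name, "result": tool.fn(**args)})
    return None  # budget exhausted: the caller decides whether to escalate
```

Validation errors are appended to `history` instead of being raised. This gives the planner a chance to retry with corrected arguments, while `max_steps` caps both latency and cost.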

Data pipelines for AI applications

- Source ingestion: change data capture (CDC) from SaaS apps and databases, plus scheduled crawls for wikis and storage buckets. Normalize everything to a common document envelope that records lineage.

- Quality gates: remove exact and near-duplicate documents, strip boilerplate, detect language, and reject low-text-density blobs that poison retrieval.

- Security and compliance: hash or tokenize sensitive fields; tag documents with purpose, region, and retention metadata to drive policy-aware retrieval.

- Embedding jobs: batch for cost, stream for hot updates. Version embeddings alongside models to support rollbacks and A/B tests.

- Feedback loop: capture user votes, click-through on citations, and escalation events; feed these into re-ranker fine-tunes and routing rules.
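This minimal sketch of the quality gates assumes plain-text documents and uses illustrative thresholds. Exact duplicates are caught by hashing whitespace-normalized text. Near-duplicates are caught by Jaccard similarity over five-word shingles. "Text density" is approximated as the share of alphabetic characters.

```python
import hashlib
import re


def shingles(text: str, n: int = 5) -> set[str]:
    """Overlapping n-word shingles (the whole text if it has fewer than n words)."""
    words = re.findall(r"\w+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(max(len(words) - n + 1, 1))}


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def quality_gate(docs: list[str], min_density: float = 0.5, max_jaccard: float = 0.8) -> list[str]:
    kept, seen_hashes, kept_shingles = [], set(), []
    for raw in docs:
        text = " ".join(raw.split())  # collapse whitespace before hashing
        if not text:
            continue
        # Low text density: mostly digits, symbols, or encoded binary.
        if sum(c.isalpha() for c in text) / len(text) < min_density:
            continue
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # exact duplicate after normalization
        sh = shingles(text)
        if any(jaccard(sh, other) >= max_jaccard for other in kept_shingles):
            continue  # near-duplicate of a document already kept
        seen_hashes.add(digest)
        kept_shingles.append(sh)
        kept.append(text)
    return kept
```

The pairwise shingle comparison is quadratic in corpus size. At scale, MinHash with locality-sensitive hashing is the usual way to approximate the same Jaccard test.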

Tooling stack that actually ships

- Vector stores: pgvector for simplicity and ACID, Weaviate or Pinecone for managed scale, Milvus for self-hosted throughput. Favor hybrid indexes.

- Orchestration: LangGraph or OpenAI Assistants for fast starts; Temporal or Dagster for durable workflows and backfills.

- Re-rankers and evaluators: Cohere Rerank, bge-reranker, or E5-large. Use lightweight local models for cost-sensitive paths.

- Observability: Arize or WhyLabs for embedding drift; LangSmith or Helicone for prompt traces; Prometheus for latency and token metrics.

- Safety: Guardrails, Pydantic validators, and a classifier for policy compliance. Always log refusal causes for auditability.

Pitfalls to avoid

- Over-chunking: tiny chunks inflate false positives and context tokens. Balance granularity with semantic cohesion.

- Ungrounded answers: require citation-density thresholds and penalize generations that lack corroborating sources.

- Eval leakage: mixing train and eval corpora yields rosy dashboards and painful rollouts. Snapshot datasets and freeze seeds.

- One-vector-to-rule-them-all: use separate embedding models for code, legal, and product docs, and route each query with a domain classifier.

- Silent failures: add timeouts, circuit breakers, and dead-letter queues for tool calls and indexers; surface partial results with warnings.

- Cost sprawl: track cost per resolved task, not per token. Use caching, smaller models for re-ranking, and batched embedding jobs.
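To guard against silent failures, here is a minimal circuit-breaker sketch. After `max_failures` consecutive errors it rejects calls for `cooldown_s` seconds, then lets one trial call through. The helper `gather_with_warnings` (a hypothetical name) returns the results of the tools that succeeded plus warnings for the rest, so the agent can surface partial results. Timeouts are assumed to be enforced by each tool's own client, for example an HTTP request timeout.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    pass


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; rejects calls until `cooldown_s` passes."""

    def __init__(self, max_failures: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpenError("circuit open; skipping call")
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # a failed trial call re-opens immediately
            raise
        self.failures = 0
        return result


def gather_with_warnings(calls: dict[str, Callable[[], Any]],
                         breakers: dict[str, CircuitBreaker]) -> tuple[dict[str, Any], list[str]]:
    results: dict[str, Any] = {}
    warnings: list[str] = []
    for name, fn in calls.items():
        try:
            results[name] = breakers[name].call(fn)
        except CircuitOpenError:
            warnings.append(f"{name}: skipped (circuit open)")
        except Exception as exc:
            warnings.append(f"{name}: failed ({exc})")
    return results, warnings
```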

Measurement, safety, and governance

- North-star metrics: answer correctness, groundedness, citation coverage, first-token latency, and cost per successful action.

- Golden sets: curated queries with canonical answers and negative controls; run nightly and on every data/model change.

- RAG-specific evals: RAGAS or TruLens for faithfulness and context recall; measure retrieval hit rate per document class.

- Policy checks: pre- and post-generation filters for PII, credentials, medical/legal disclaimers, and brand tone.

- Human-in-the-loop: route low-confidence or high-risk tasks to reviewers; learn thresholds from outcomes.
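Here is a sketch of per-class retrieval hit rate over a golden set. It assumes a `retrieve(query)` function that returns ranked document IDs, and golden cases labeled with a document class. An empty `relevant_ids` set marks a negative control. The class names and IDs in the example are made up.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class GoldenCase:
    query: str
    doc_class: str          # e.g. "policy" or "pricing"; labels are illustrative
    relevant_ids: set[str]  # empty set = negative control (nothing should match)


def hit_rate_by_class(cases: Sequence[GoldenCase], retrieve: Callable[[str], list[str]],
                      k: int = 5) -> dict[str, float]:
    """Share of cases per class where at least one relevant doc appears in the top k."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for case in cases:
        if not case.relevant_ids:
            continue  # negative controls are scored separately, e.g. as expected refusals
        top = retrieve(case.query)[:k]
        totals[case.doc_class] += 1
        hits[case.doc_class] += any(doc_id in case.relevant_ids for doc_id in top)
    return {cls: hits[cls] / totals[cls] for cls in totals}
```

A snapshot of the cases and the retriever's output can be stored with each nightly run. Any change in a class's hit rate then traces back to a specific data or model change.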

Scaling playbook

- Caching tiers: query-level, passage-level, and tool-response caches with TTLs aligned to data volatility.

- Model routing: easy tasks to small models, complex ones to larger models; monitor drift and retrain re-rankers quarterly.

- Canaries and dark launches: expose agents to 1-5% of traffic with shadow comparisons before full rollout.

- Multimodal retrieval: OCR-plus-vision embeddings for scanned contracts and diagrams; store layout metadata for better grounding.
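This sketches deterministic canary assignment with a shadow comparison. It assumes callable `stable_agent` and `candidate_agent` functions and uses a plain list as the comparison log (all hypothetical). Hashing a salted user ID keeps each user in the same bucket across requests, so a 2% canary stays the same 2% of users.

```python
import hashlib
from typing import Callable


def in_canary(user_id: str, percent: float, salt: str = "agent-v2") -> bool:
    """Deterministically place roughly `percent`% of users in the canary bucket."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999, i.e. 0.01% granularity
    return bucket < percent * 100


def handle(query: str, user_id: str, stable_agent: Callable[[str], str],
           candidate_agent: Callable[[str], str], log: list, percent: float = 2.0) -> str:
    answer = stable_agent(query)
    if in_canary(user_id, percent):
        # Shadow mode: the candidate runs, but the user still sees the stable answer.
        shadow = candidate_agent(query)
        log.append({"user": user_id, "query": query, "agrees": shadow == answer})
    return answer
```

Exact string equality is a crude agreement check. In practice the shadow log would feed the golden-set and groundedness evals rather than compare raw strings. Changing the salt reshuffles which users land in the canary.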

Engagement model and partner fit

If you want speed without committing to a monolith team, consider flexible hourly development contracts that scale with milestones and risk. A seasoned product engineering partner will prototype the retrieval layer, harden data pipelines for AI applications, and instrument rock-solid evaluations before your first exec demo. Need talent on tap? slashdev.io provides excellent remote engineers and software agency expertise for business owners and startups to realize their ideas, while your core team owns the roadmap and IP. Start small with a retrieval spine, add agent tools where they prove ROI, and expand based on evidence, not optimism.